LinkedIn publishing with adaptive voice learning. No other tool on the market learns your voice from usage.

LinWheel

Be seen. Be heard. Be elsewhere.

LinkedIn for founders who'd rather be founding. Your agent learns your voice, optimizes engagement, and runs distribution while you sleep.

Demo

The full workflow in Claude Code

Results

0

LinkedIn sessions per week

my agent logs in so I don't have to. I stay in my terminal where it's safe.

1

tool no one else has

adaptive voice learning from approve/reject signals. the drafts get better because the system actually measures what works.

any

MCP client

Claude Code, Cursor, OpenClaw, Windsurf. if it speaks MCP, LinWheel works inside it.

You know you should post on LinkedIn. You haven't in weeks. Maybe months. The activation energy is absurd: open the site, stare at the text box, write something that doesn't make you cringe, give up, close the tab.

You're not lazy. You shipped three features this week. You just can't make yourself perform on a platform that feels like a high school popularity contest.

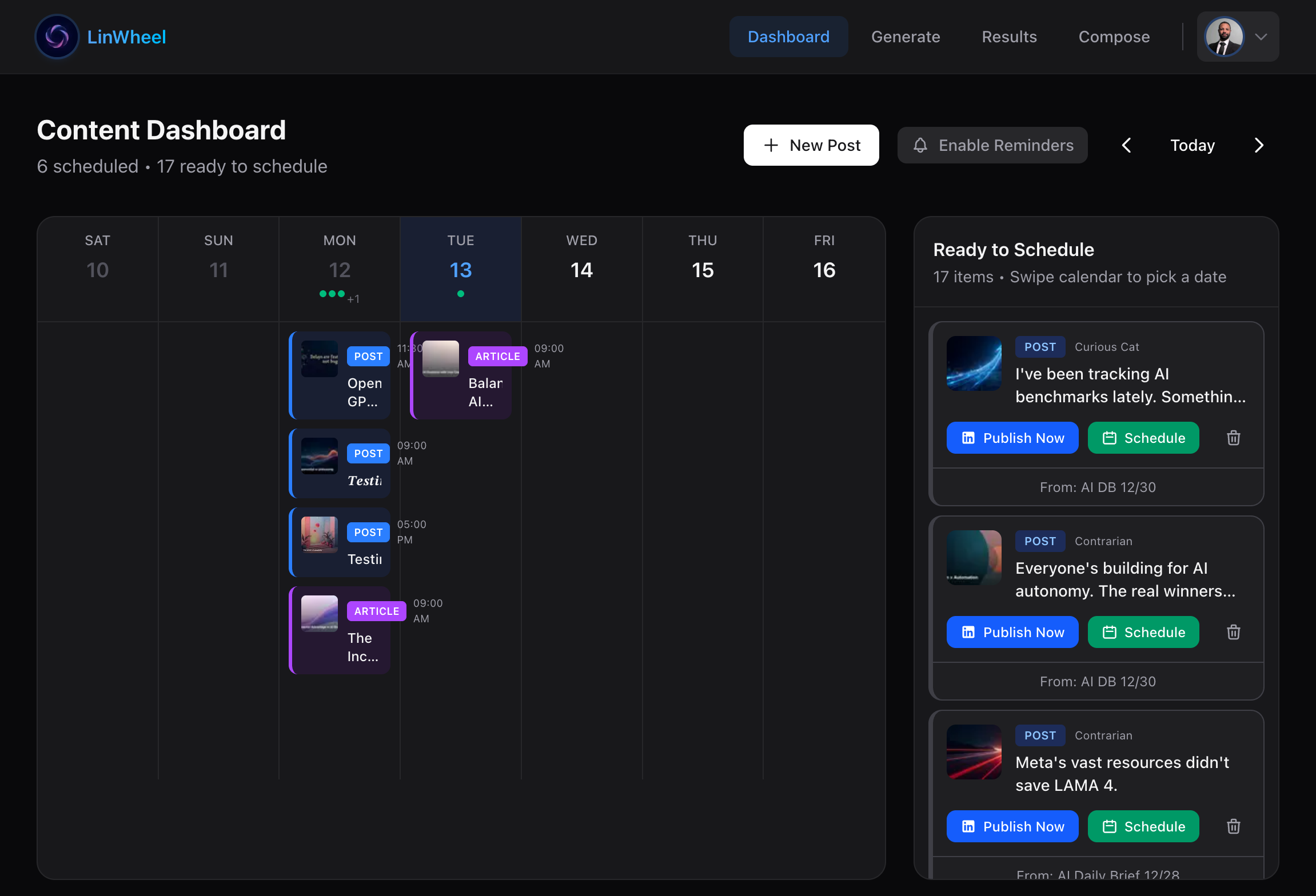

LinWheel makes that fine. It's an MCP server: you install one npm package, point your agent at it, and the agent gets tools for turning raw ideas into LinkedIn-native content. It reshapes your idea through seven content angles, generates branded cover images, and schedules the result. All of it happens inside whatever environment you already use: Claude Code, Cursor, OpenClaw, Windsurf.

You never leave your terminal. The better you get at your actual work, the more material your agent has to write about. Your craft is the input; a social presence is the output.

Our Agents (Actually) Learn to Speak

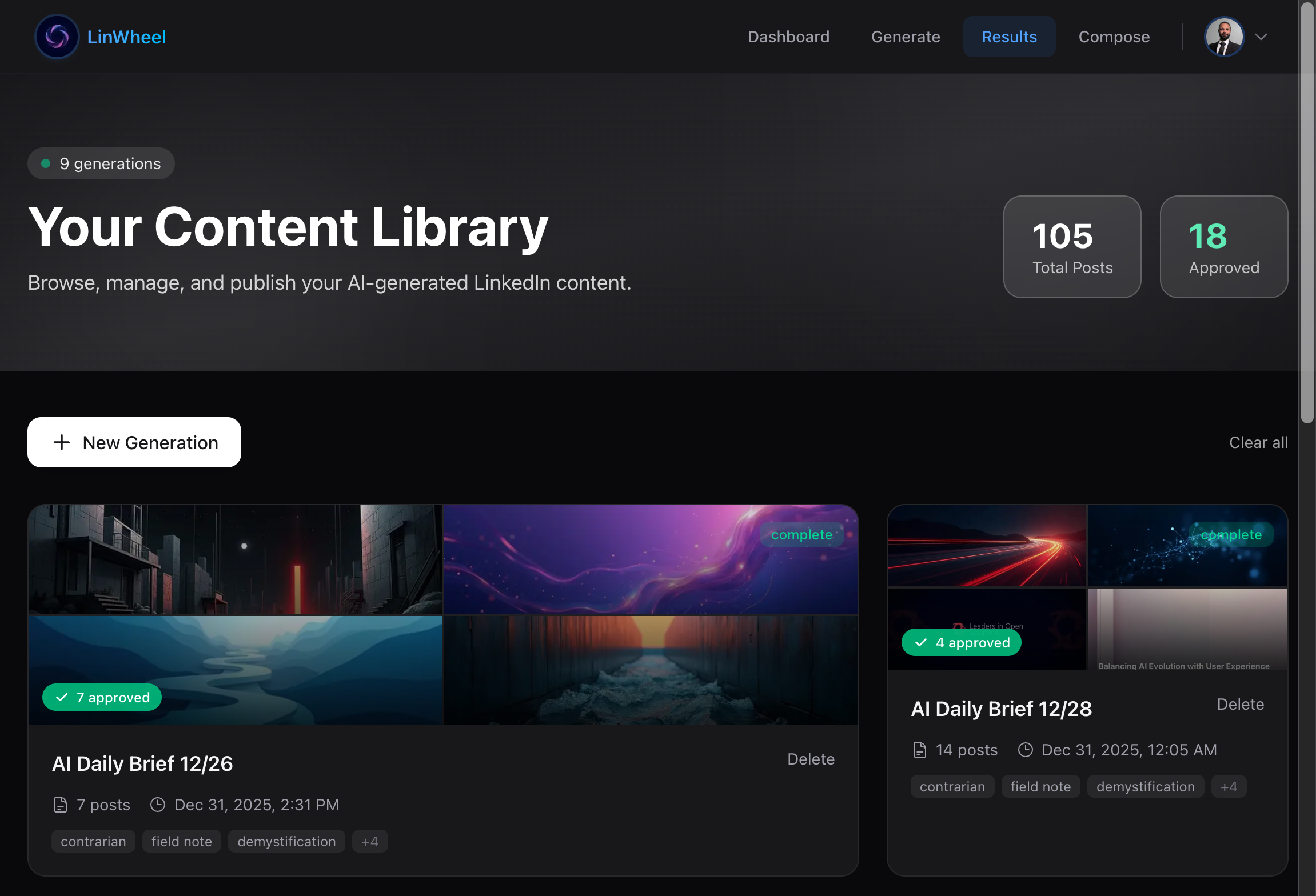

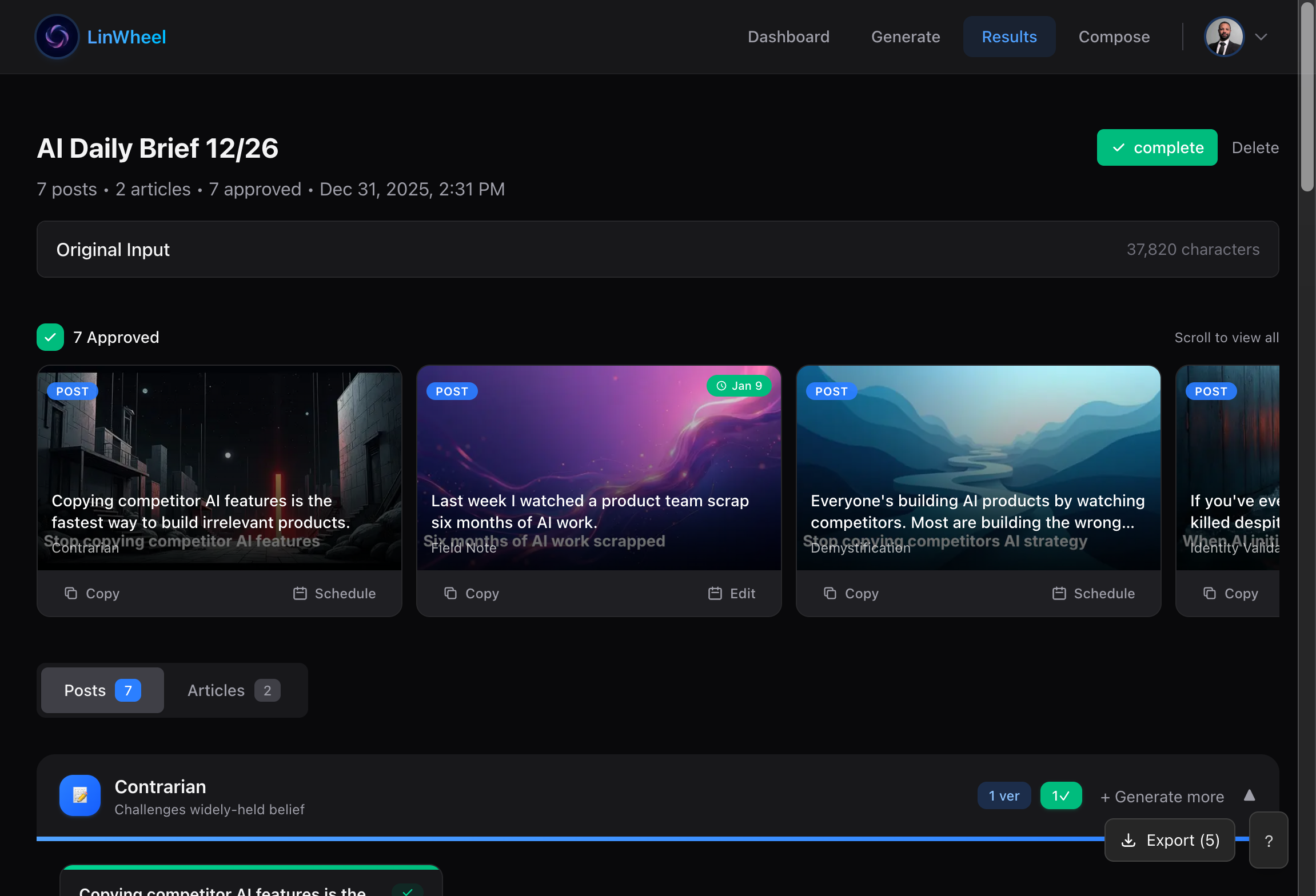

Voice profiles are the part that's actually novel. You create a named profile and the agent learns to match it. After a few runs through the loop, the drafts stop reading like they were written by three LLMs in a trench coat and start reading like something you'd actually write.

Even after convergence, nothing publishes without you: post_approve has to succeed before post_schedule will run. A hallucinated LinkedIn post goes to your professional network with your name on it. You can't quietly roll that back. The human-in-the-loop isn't a toggle you can disable. It's structural.

Slides from Scratch

Document posts on LinkedIn tend to see significantly more engagement than text or image posts. That's why LinWheel generates cover images and carousel slides to match your content: your logo positioned and scaled to spec, your brand colors applied consistently.

We don't use AI to generate images. There are no external image API calls and no per-image costs. Cover images and carousel slides are rendered locally with Satori and resvg-wasm: branded gradient backgrounds with typographic overlays. Because the rendering is deterministic, two images rendered a month apart for the same brand style look like they came from the same designer.

For Engineers

Architecture

LinWheel speaks MCP over stdio. The agent decides how to traverse the tool pipeline. There's no enforced workflow. The only hard gate is post_approve before post_schedule.
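
One way to picture that hard gate is as a status check inside the scheduling step itself, so there is no code path around it. The sketch below is a hypothetical in-memory model, not LinWheel's implementation: `PostStore`, its method names, and the status values are assumptions standing in for the post_approve and post_schedule tools.

```typescript
// Hypothetical model of the approval gate. Names are illustrative only.
type PostStatus = "draft" | "approved" | "scheduled";

interface Post {
  id: string;
  body: string;
  status: PostStatus;
  scheduledFor?: Date;
}

class PostStore {
  private posts = new Map<string, Post>();

  createDraft(id: string, body: string): Post {
    const post: Post = { id, body, status: "draft" };
    this.posts.set(id, post);
    return post;
  }

  // Stands in for post_approve: the only way a draft becomes approved.
  approve(id: string): Post {
    const post = this.find(id);
    if (post.status !== "draft") {
      throw new Error(`post ${id} is already ${post.status}`);
    }
    post.status = "approved";
    return post;
  }

  // Stands in for post_schedule: refuses anything that is not approved.
  schedule(id: string, when: Date): Post {
    const post = this.find(id);
    if (post.status !== "approved") {
      throw new Error(`post ${id} must be approved before scheduling`);
    }
    post.status = "scheduled";
    post.scheduledFor = when;
    return post;
  }

  private find(id: string): Post {
    const post = this.posts.get(id);
    if (!post) throw new Error(`unknown post ${id}`);
    return post;
  }
}
```

Because the check lives in `schedule` rather than in a prompt or a setting, an agent that skips the approval step gets an error instead of a published post.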

The learning layer is powered by qortex. Each account trains its own posterior distribution: approve or reject a draft, and the system updates its beliefs about which content angles work for your voice. Feedback never leaks between accounts.
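
A minimal sketch of that per-account update, assuming a Beta-Bernoulli form of Thompson Sampling with a uniform prior. The exact model qortex uses is not specified here, so treat `VoiceBandit`, the angle names, and the user IDs as hypothetical; this is not qortex's API.

```typescript
// Hypothetical sketch: per-user Thompson Sampling over content angles.
type Rng = () => number; // uniform in [0, 1)

interface AngleStats {
  approvals: number;
  rejections: number;
}

// Gamma(k, 1) for integer k >= 1, as a sum of k exponential draws.
function sampleGammaInt(k: number, rng: Rng): number {
  let sum = 0;
  for (let i = 0; i < k; i++) sum -= Math.log(1 - rng());
  return sum;
}

// Beta(a, b) for integer a, b >= 1, built from two gamma draws.
function sampleBetaInt(a: number, b: number, rng: Rng): number {
  const x = sampleGammaInt(a, rng);
  const y = sampleGammaInt(b, rng);
  const total = x + y;
  return total === 0 ? 0.5 : x / total;
}

class VoiceBandit {
  private readonly angles: string[];
  private readonly rng: Rng;
  // userId -> angle -> counts. Each user has a separate map, so feedback is never shared.
  private readonly byUser = new Map<string, Map<string, AngleStats>>();

  constructor(angles: string[], rng: Rng = Math.random) {
    if (angles.length === 0) throw new Error("need at least one angle");
    this.angles = angles;
    this.rng = rng;
  }

  private statsFor(userId: string): Map<string, AngleStats> {
    let stats = this.byUser.get(userId);
    if (!stats) {
      stats = new Map<string, AngleStats>();
      for (const angle of this.angles) {
        stats.set(angle, { approvals: 0, rejections: 0 });
      }
      this.byUser.set(userId, stats);
    }
    return stats;
  }

  // Draw one sample per angle from its posterior and pick the largest draw.
  chooseAngle(userId: string): string {
    let best = "";
    let bestDraw = -1;
    this.statsFor(userId).forEach((s, angle) => {
      const draw = sampleBetaInt(s.approvals + 1, s.rejections + 1, this.rng);
      if (draw > bestDraw) {
        bestDraw = draw;
        best = angle;
      }
    });
    return best;
  }

  // Approve or reject updates only this user's counts for this angle.
  record(userId: string, angle: string, approved: boolean): void {
    const s = this.statsFor(userId).get(angle);
    if (!s) throw new Error(`unknown angle ${angle}`);
    if (approved) s.approvals += 1;
    else s.rejections += 1;
  }

  posteriorMean(userId: string, angle: string): number {
    const s = this.statsFor(userId).get(angle);
    if (!s) throw new Error(`unknown angle ${angle}`);
    return (s.approvals + 1) / (s.approvals + s.rejections + 2);
  }
}
```

Sampling from the posterior, rather than always picking the angle with the best average, keeps under-tested angles in rotation until the data rules them out.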

Key Decisions

Why MCP?

Content tools that live behind their own UI create a context gap. You describe what you shipped, paste in your notes, re-explain your project. An MCP server runs inside the agent that already has that context.

The agent knows what you built because it built it with you. LinWheel gives it the tools to write about it. The demo video above shows the full flow.

More on the shift from standalone app to MCP-first →

Why Learning Instead of Templates?

Tone dropdowns give you five adjectives and a prayer. Voice profiles learn from what you actually approve and reject. The drafts converge on your voice because the system measures what works, not because someone wrote a better prompt.

The full mechanism →

Why Local Rendering?

External image generation means per-call costs, rate limits, and brand drift. Two cover images generated a week apart can look like different companies.

Satori + resvg gives us deterministic, on-brand rendering at zero marginal cost. We render typographic compositions, not AI art. For LinkedIn, consistency beats novelty.

What Was Hard

LinWheel itself was a fairly easy build. The hard part was turning a single-machine learning engine into infrastructure that multiple users could share.

We use qortex to power LinWheel's learning. It started as a local library providing a knowledge graph with Thompson Sampling baked in. Initially it ran inside one process on one machine: we built it for a single local agent before realizing it should run as a general service supporting multiple users, each with independent models and memory.

That meant extracting qortex into a distributed backend with a proper API, Postgres-backed storage, and consumer isolation. The migration broke assumptions about single-process state that we'd baked in at every layer.

The open problem is feedback sparsity combined with cold start. Most posts get approved, so the bandit's posterior skews positive and rejection signals accumulate slowly, which delays convergence on voice-matched output. New profiles with fewer than ~20 reference posts produce noticeably generic drafts. The bandit needs data, and no amount of prompt engineering eliminates the cold-start period. We're exploring whether richer feedback (partial edits, not just approve/reject) can accelerate convergence.
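
To see why mostly-approved feedback separates angles slowly, here is a small worked example. It assumes a Beta posterior with a uniform prior, and the approval counts are invented for illustration; they are not LinWheel data.

```typescript
// Illustrative only: the counts below are made up.
interface BetaSummary {
  mean: number;
  sd: number;
}

// Mean and standard deviation of a Beta(1 + approvals, 1 + rejections) posterior,
// i.e. a uniform prior updated with approve/reject counts.
function betaSummary(approvals: number, rejections: number): BetaSummary {
  const a = approvals + 1;
  const b = rejections + 1;
  const n = a + b;
  const mean = a / n;
  const variance = (a * b) / (n * n * (n + 1));
  return { mean, sd: Math.sqrt(variance) };
}

// Two hypothetical angles after 20 drafts each: one approved 18 times, one 16 times.
const strong = betaSummary(18, 2);
const weaker = betaSummary(16, 4);

// Gap between the posterior means, compared with the combined spread.
const gap = strong.mean - weaker.mean;
const separated = gap > strong.sd + weaker.sd;

console.log({ strong, weaker, gap, separated });
```

With these counts the gap between the means is smaller than the combined standard deviations, so `separated` is false: when both angles are approved most of the time, 20 drafts per angle are not enough to tell them apart with confidence.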
