Interlinear

Learn Latin From Caesar. Eventually.

A language tutor that distinguishes a typo from a conceptual gap and responds accordingly

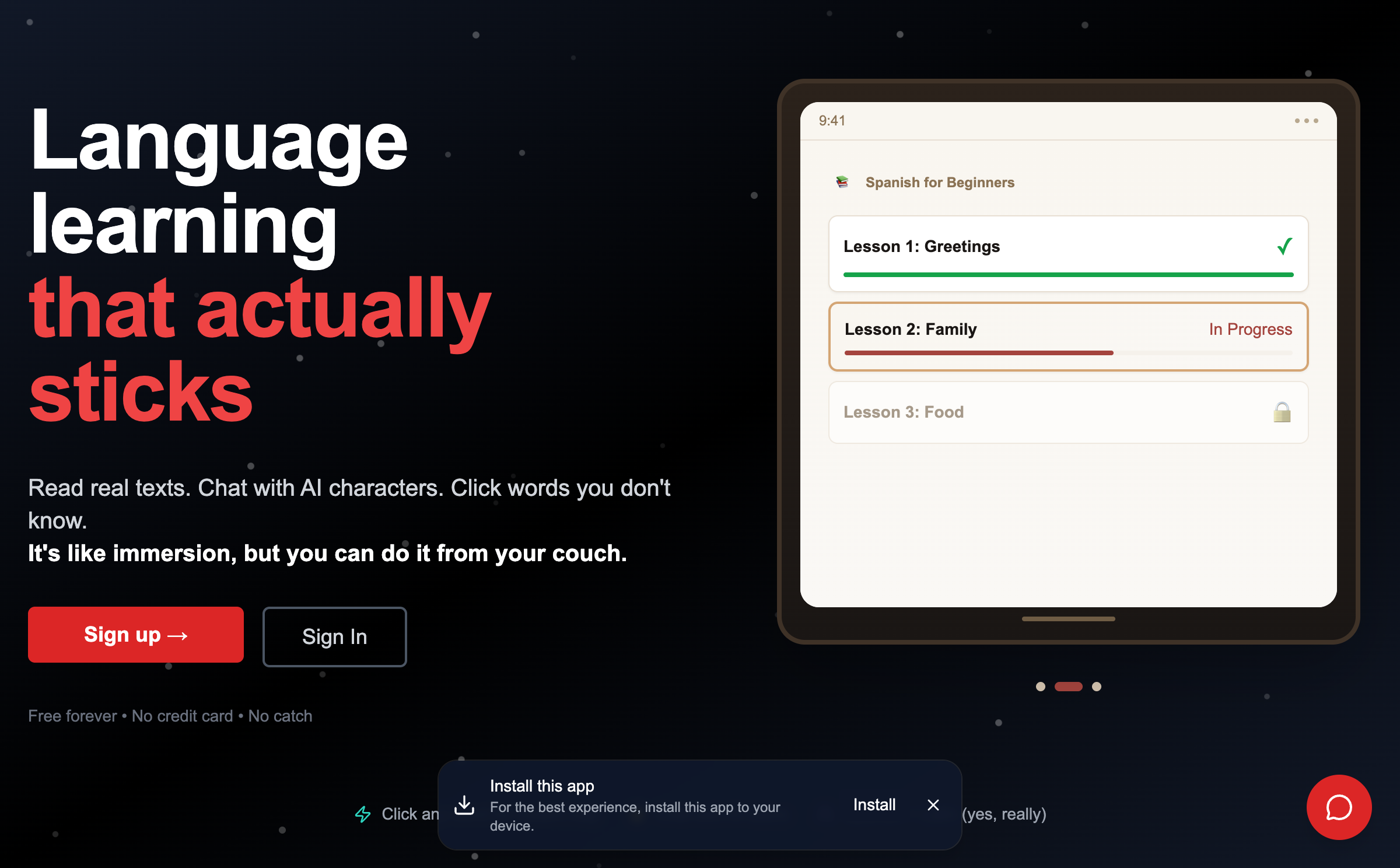

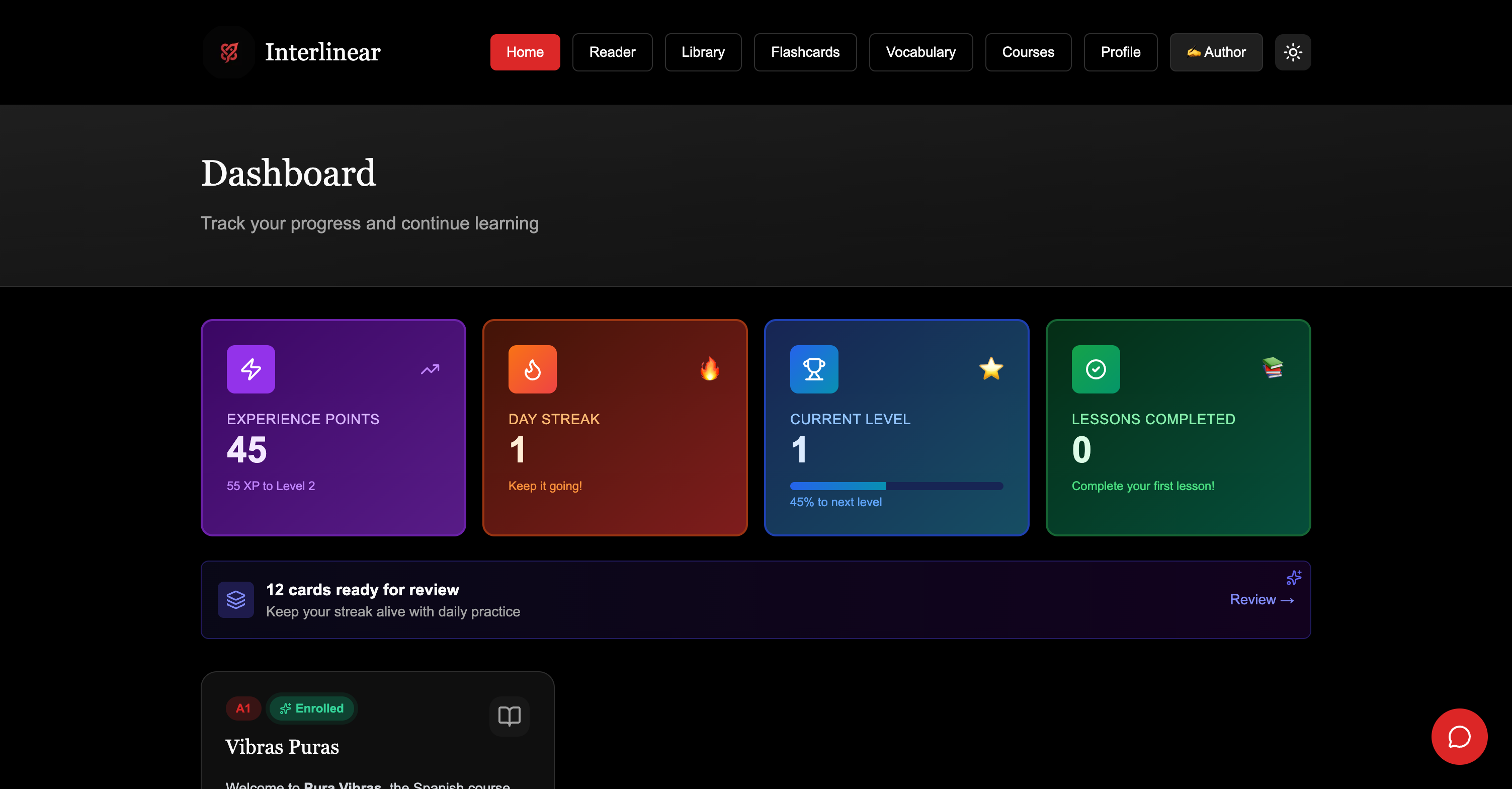

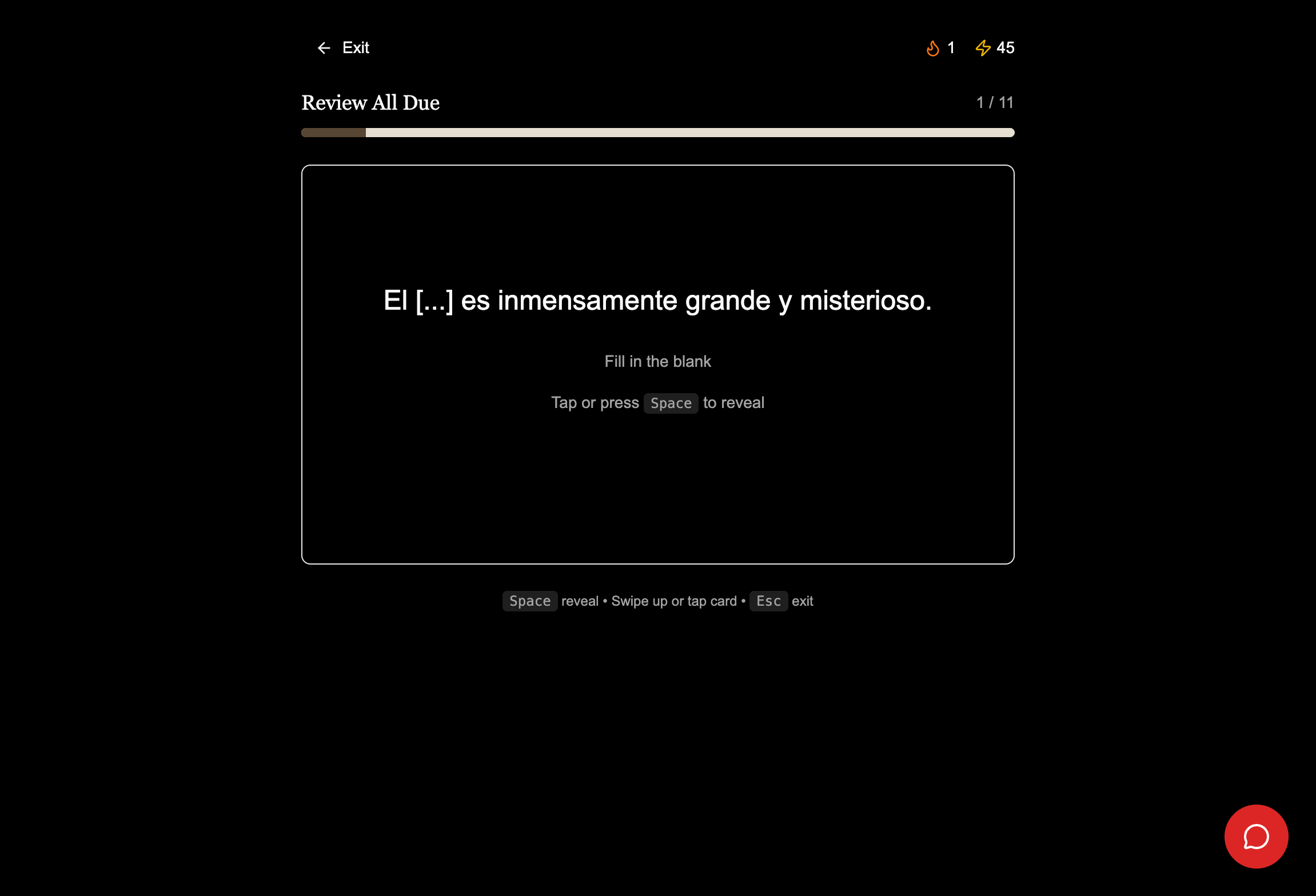

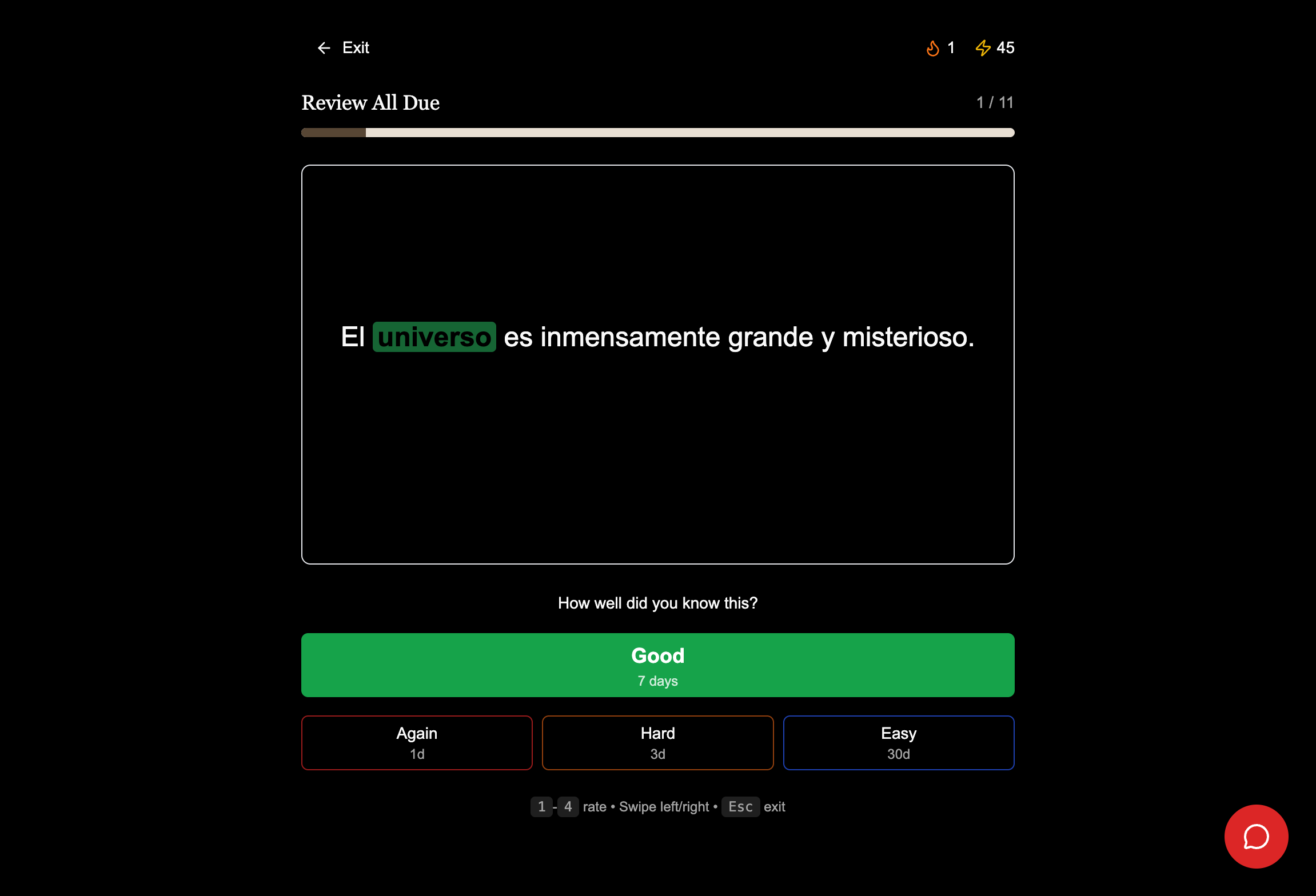

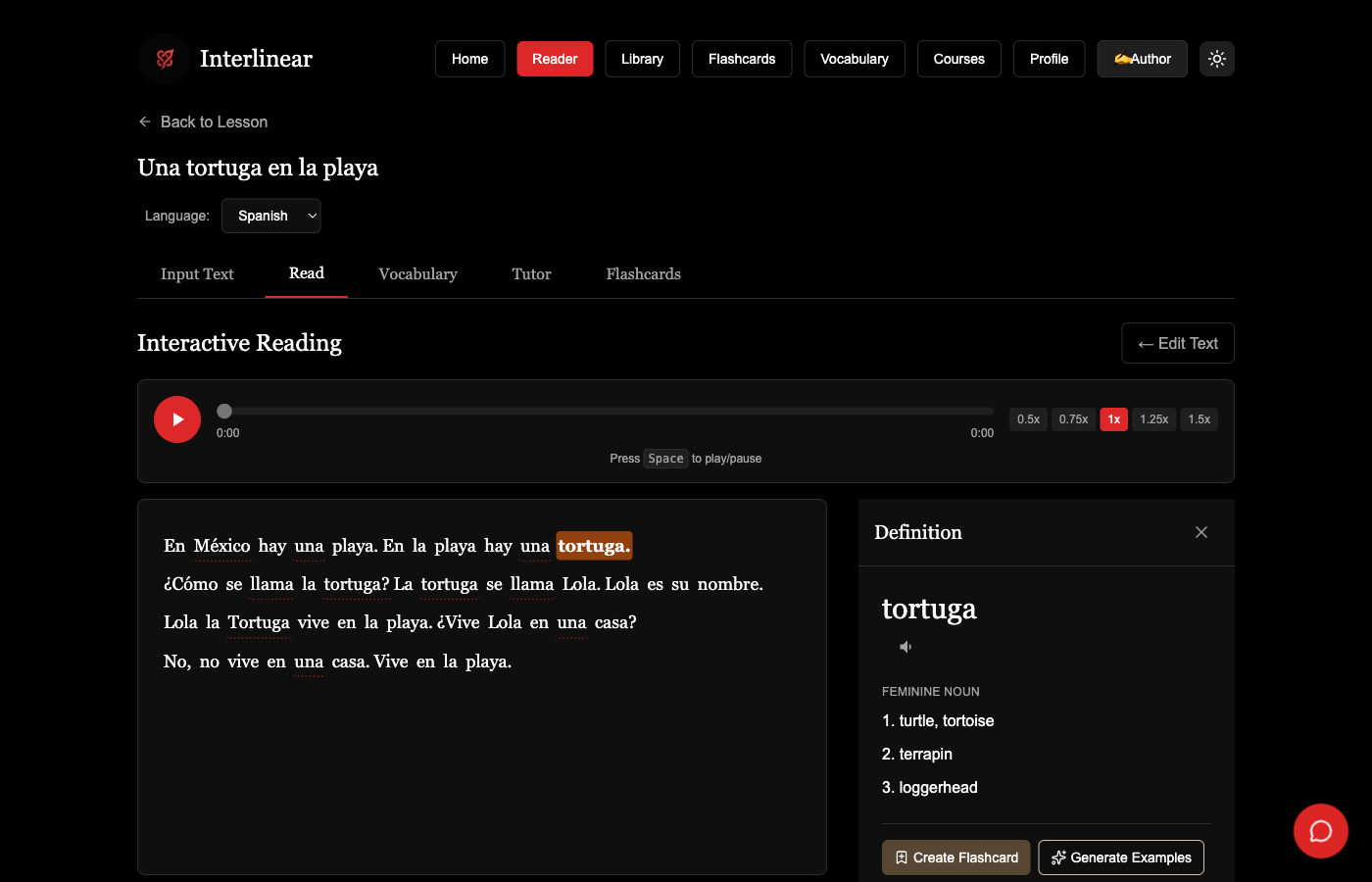

Screenshots

Interlinear landing page - Language learning that actually sticks

Results

- 5 error types, different fixes: typo ≠ case error ≠ vocabulary gap

- Any text becomes a course: exercises, dialogs, vocabulary

- Classical languages that work: Latin morphology, not pattern matching

Ever wanted to learn Latin from Caesar? Practice Greek with Cleopatra? Have Cicero correct your subjunctive?

That's where this is going.

But first, the foundation. Every language app gives you the same feedback: ❌ Wrong. Try again. A slot machine with educational branding. The difference between a typo and a conceptual gap is the difference between "you fat-fingered it" and "you don't understand accusative case." A human tutor sees this instantly. Duolingo doesn't try.

Interlinear does. When you write puellam instead of puella, it identifies a case error and explains why nominative was expected. Different errors trigger different interventions, using the same evaluation harness patterns we apply to production LLMs.

Course generation works the same way. Upload any reading and the system produces a full course: comprehension exercises, translation drills, contextual dialogs, vocabulary cards. All calibrated to your demonstrated level.

Then the dialogs come alive. AI-generated talking heads enable real-time conversation practice with historical figures and patient tutors who never get frustrated. Error correction and course generation feed into conversations that go beyond drilling.

First course in development: Introduction to Old Norse. Academic rigor. Research preparation. And eventually, a conversation with a skald.

For Engineers

Architecture

Mastra orchestrates multi-stage content generation and error analysis. Student input flows through classification, course generation, and dialog pipelines depending on the interaction context.
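As a rough illustration of that routing step, here is a minimal sketch in plain TypeScript. It does not use Mastra's API; the `Interaction` shapes, the pipeline names, and the `route` function are all hypothetical and only mirror the three pipelines named above.

```typescript
// Hypothetical interaction shapes; the real system's inputs are not documented here.
type Interaction =
  | { kind: "exercise-answer"; answer: string; expected: string }
  | { kind: "text-upload"; text: string }
  | { kind: "dialog-turn"; utterance: string };

// The three pipelines named in the text.
type Pipeline = "classification" | "course-generation" | "dialog";

// Pick a pipeline from the interaction context.
// The switch is exhaustive, so adding a new kind is a compile-time error until it is routed.
function route(interaction: Interaction): Pipeline {
  switch (interaction.kind) {
    case "exercise-answer":
      return "classification";
    case "text-upload":
      return "course-generation";
    case "dialog-turn":
      return "dialog";
  }
}
```

A discriminated union keeps the routing decision in one place and lets the compiler flag any unrouted interaction type.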

The error classifier distinguishes five categories (mechanical, lexical, morphological, syntactic, semantic), each triggering a different pedagogical response. CEFR calibration is continuous and per-skill: you might be B2 in vocabulary but A2 in the subjunctive.
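One way to picture the category-to-response mapping is a lookup table keyed by error category. The five category names come from the text above; the `Intervention` shape and the specific responses are assumptions made for illustration, not the project's actual pedagogy.

```typescript
// Category names are taken from the text; everything else is illustrative.
type ErrorCategory = "mechanical" | "lexical" | "morphological" | "syntactic" | "semantic";

// Hypothetical description of what the tutor does next.
interface Intervention {
  action: string;
  retryImmediately: boolean;
}

// Record<ErrorCategory, ...> forces an entry for every category at compile time.
const interventions: Record<ErrorCategory, Intervention> = {
  mechanical: { action: "show the corrected spelling and move on", retryImmediately: true },
  lexical: { action: "queue the word for vocabulary review", retryImmediately: false },
  morphological: { action: "explain the expected inflection and drill the paradigm", retryImmediately: false },
  syntactic: { action: "walk through the sentence structure", retryImmediately: false },
  semantic: { action: "revisit the meaning of the passage", retryImmediately: false },
};

// Look up the response for a classified error.
function respondTo(category: ErrorCategory): Intervention {
  return interventions[category];
}
```

Under these assumptions a typo gets a quick correction and an immediate retry, while a morphological error leads to an explanation instead of another blind attempt.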

Classical language support uses real inflection analysis via dedicated Latin and Old Norse microservices rather than pattern matching. ElevenLabs handles TTS for pronunciation modeling and conversation practice.
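To make the contrast with pattern matching concrete, the sketch below analyzes a first-declension noun by its ending and compares the result with the expected case. It is a toy stand-in for the Latin microservice, whose real interface is not described here: the ending table covers only the regular first declension (macrons omitted), and `analyze` and `checkCase` are hypothetical names.

```typescript
type GrammaticalCase = "nominative" | "genitive" | "dative" | "accusative" | "ablative" | "vocative";
type GrammaticalNumber = "singular" | "plural";

interface Reading {
  gramCase: GrammaticalCase;
  gramNumber: GrammaticalNumber;
}

interface EndingEntry {
  ending: string;
  reading: Reading;
}

// Regular first-declension endings. Several endings are ambiguous across cases.
const firstDeclension: EndingEntry[] = [
  { ending: "a", reading: { gramCase: "nominative", gramNumber: "singular" } },
  { ending: "a", reading: { gramCase: "vocative", gramNumber: "singular" } },
  { ending: "a", reading: { gramCase: "ablative", gramNumber: "singular" } },
  { ending: "ae", reading: { gramCase: "genitive", gramNumber: "singular" } },
  { ending: "ae", reading: { gramCase: "dative", gramNumber: "singular" } },
  { ending: "ae", reading: { gramCase: "nominative", gramNumber: "plural" } },
  { ending: "ae", reading: { gramCase: "vocative", gramNumber: "plural" } },
  { ending: "am", reading: { gramCase: "accusative", gramNumber: "singular" } },
  { ending: "as", reading: { gramCase: "accusative", gramNumber: "plural" } },
  { ending: "arum", reading: { gramCase: "genitive", gramNumber: "plural" } },
  { ending: "is", reading: { gramCase: "dative", gramNumber: "plural" } },
  { ending: "is", reading: { gramCase: "ablative", gramNumber: "plural" } },
];

// Return every case/number reading the form could have for the given stem.
function analyze(form: string, stem: string): Reading[] {
  if (form.slice(0, stem.length) !== stem) return [];
  const ending = form.slice(stem.length);
  return firstDeclension.filter((entry) => entry.ending === ending).map((entry) => entry.reading);
}

type Verdict = "correct" | "case error" | "not a form of this noun";

// Compare the possible readings with the case the exercise expected (number is not checked).
function checkCase(form: string, stem: string, expected: GrammaticalCase): Verdict {
  const readings = analyze(form, stem);
  if (readings.length === 0) return "not a form of this noun";
  return readings.some((r) => r.gramCase === expected) ? "correct" : "case error";
}

console.log(checkCase("puellam", "puell", "nominative")); // "case error"
console.log(checkCase("puella", "puell", "nominative")); // "correct"
```

Because `analyze` returns every possible reading, an ambiguous form such as puellae is accepted when any of its readings matches the expected case. A plain string comparison against a single answer cannot do that.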

Key Decisions

Error Taxonomy Over Binary Feedback

Production LLM evals distinguish retrieval failures from reasoning errors from formatting issues. Same logic applies to language learning. Different root causes need different interventions.

Course Generation From Any Text

Fixed curricula are the bottleneck. Let students learn from texts they actually care about. The platform becomes the course authoring tool.

Classical Language First

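Latin morphology is the hardest test case. A single verb can have well over a hundred inflected forms, and noun endings are ambiguous across cases. If the system can handle that with proper case analysis, most modern languages are easier by comparison. Built the hard thing first.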

Continuous CEFR Calibration

Students are uneven. A2 in one skill, B2 in another. The system tracks competency per grammatical concept, not per student level.
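A per-concept tracker might look like the sketch below. The numeric scale (1 = A1 up to 6 = C2), the learning rate, and the update rule are all assumptions chosen for illustration; the source only says that calibration is continuous and tracked per grammatical concept.

```typescript
const CEFR_BANDS = ["A1", "A2", "B1", "B2", "C1", "C2"] as const;
type CefrBand = (typeof CEFR_BANDS)[number];

// One graded attempt at an exercise targeting a single concept.
interface Attempt {
  concept: string;
  difficulty: number; // assumed scale: 1 = A1 ... 6 = C2
  correct: boolean;
}

// Continuous estimate per concept, not one level per student.
type Profile = { [concept: string]: number | undefined };

const DEFAULT_ESTIMATE = 1; // assumed starting point for unseen concepts
const LEARNING_RATE = 0.2; // assumed step size

function estimateFor(profile: Profile, concept: string): number {
  return profile[concept] ?? DEFAULT_ESTIMATE;
}

// Nudge the estimate up after success on harder material,
// and down after failure on material at or below the current estimate.
function recordAttempt(profile: Profile, attempt: Attempt): Profile {
  const current = estimateFor(profile, attempt.concept);
  const target = attempt.correct
    ? Math.max(current, attempt.difficulty)
    : Math.min(current, attempt.difficulty - 1);
  const moved = current + LEARNING_RATE * (target - current);
  const clamped = Math.min(6, Math.max(1, moved));
  return { ...profile, [attempt.concept]: clamped };
}

// Map a continuous estimate back to a CEFR band label.
function bandOf(estimate: number): CefrBand {
  const index = Math.max(0, Math.min(5, Math.floor(estimate) - 1));
  return CEFR_BANDS[index];
}

let learner: Profile = { vocabulary: 4, subjunctive: 2 };
learner = recordAttempt(learner, { concept: "vocabulary", difficulty: 5, correct: true });
console.log(bandOf(estimateFor(learner, "vocabulary")), bandOf(estimateFor(learner, "subjunctive")));
```

In this sketch a correct answer on a harder vocabulary item moves only the vocabulary estimate, so the same learner can sit at B2 for vocabulary and A2 for the subjunctive.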

What Was Hard

The hard part isn't generating exercises. It's generating sequences that teach.

- Each generated course needs narrative structure where skills build on each other, not random drill soup

- Latin morphology is adversarial: a single verb can have well over a hundred forms, noun endings are ambiguous across cases, and some irregular verbs look like regular ones from a different conjugation. Error classification has to be precise or the feedback misleads

- CEFR calibration per grammatical concept (not per student) means the difficulty model has many more dimensions than a simple A1-C2 slider
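The first point, skills that build on each other, can be sketched as ordering a prerequisite graph. The example uses Kahn's topological sort as a stand-in; the source does not say how sequencing is actually done, and the `Skill` shape and skill names are hypothetical.

```typescript
// Hypothetical skill node: an id plus the ids of skills that must come first.
interface Skill {
  id: string;
  requires: string[];
}

// Order skills so that every prerequisite appears before the skills that depend on it.
function sequence(skills: Skill[]): string[] {
  const remaining: { [id: string]: number } = {};
  const dependents: { [id: string]: string[] } = {};
  for (const skill of skills) {
    remaining[skill.id] = skill.requires.length;
    dependents[skill.id] = [];
  }
  for (const skill of skills) {
    for (const prerequisite of skill.requires) {
      if (!Object.prototype.hasOwnProperty.call(dependents, prerequisite)) {
        throw new Error("Unknown prerequisite: " + prerequisite);
      }
      dependents[prerequisite].push(skill.id);
    }
  }
  // Start with skills that need nothing, then release dependents as prerequisites are placed.
  const ready = skills.filter((s) => s.requires.length === 0).map((s) => s.id);
  const ordered: string[] = [];
  while (ready.length > 0) {
    const next = ready.shift() as string;
    ordered.push(next);
    for (const dependent of dependents[next]) {
      remaining[dependent] -= 1;
      if (remaining[dependent] === 0) ready.push(dependent);
    }
  }
  if (ordered.length !== skills.length) throw new Error("Prerequisites contain a cycle");
  return ordered;
}

const lessonPlan = sequence([
  { id: "purpose-clauses", requires: ["present-subjunctive"] },
  { id: "present-subjunctive", requires: ["present-indicative"] },
  { id: "direct-objects", requires: ["first-declension", "present-indicative"] },
  { id: "first-declension", requires: [] },
  { id: "present-indicative", requires: [] },
]);
console.log(lessonPlan.join(" -> "));
```

An ordering like this only guarantees that nothing is taught before its prerequisites. The narrative structure the text describes would still need pacing and review layered on top.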

Stack

Demo Videos

Tutor roleplay - Practice dialogs with AI feedback